Stop Measuring AI ROI. You're Chasing the Wrong Number.

The same publications declaring AI a failure today declared cloud computing a failure in 2010. They were wrong then too.

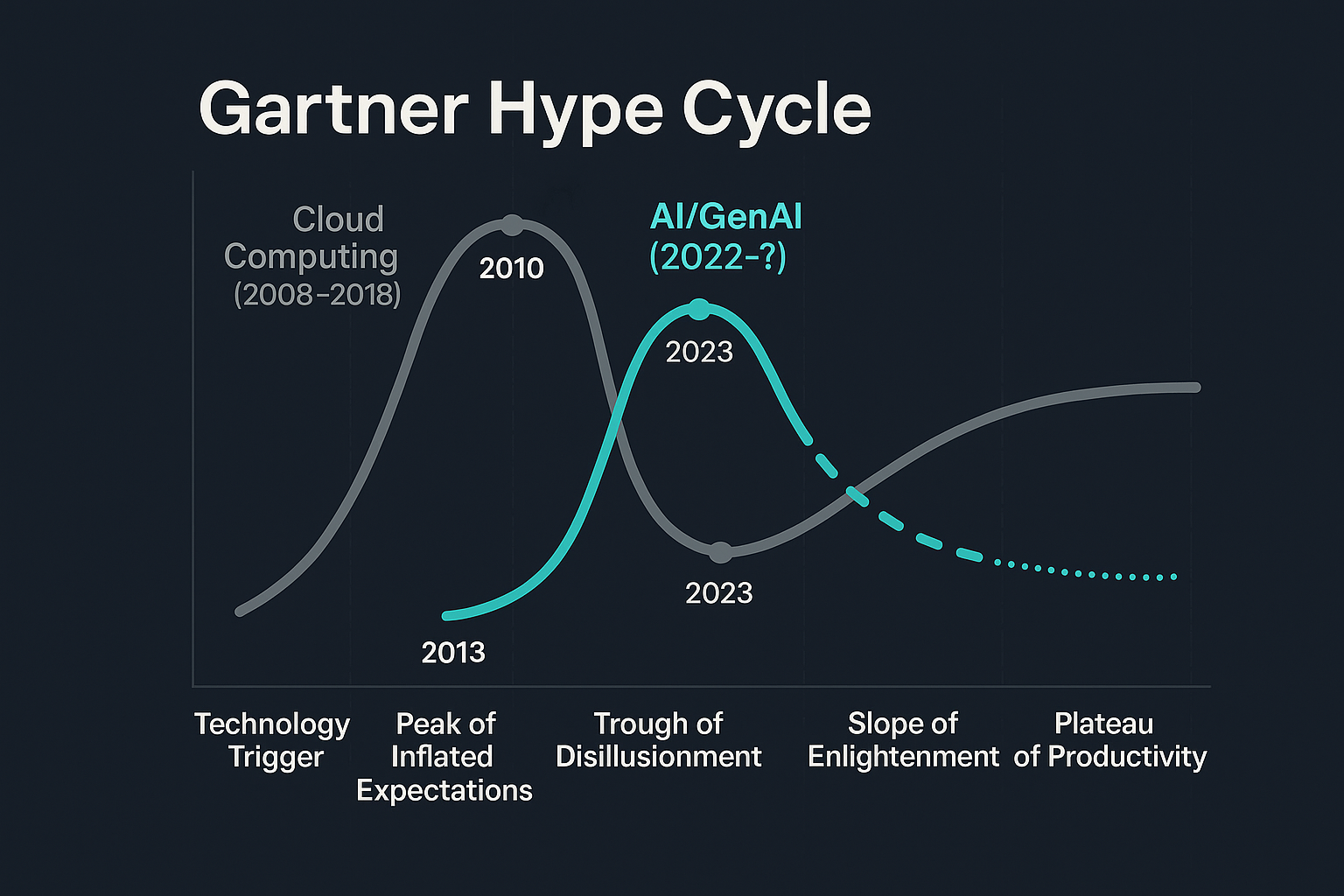

In 2010, Gartner placed cloud computing at the Peak of Inflated Expectations and sent it tumbling into the Trough of Disillusionment [6]. It stayed there for roughly four years. That same year, Computerworld published “Top 5 disappointments as cloud computing enters the Trough of Disillusionment” [8]. In 2011, InfoWorld ran the headline “It’s official: ‘Cloud computing’ is now meaningless” [7]. By 2012, Gartner itself was questioning whether ROI was even the right measure of cloud success [10]. In 2013, Forbes ran “Cloud Computing’s ROI Increasingly Elusive” [9].

Today, 94% of enterprises use cloud computing [18]. The Trough of Disillusionment wasn’t a graveyard. It was a speed bump.

Sound familiar?

The AI Failure Narrative

Today’s headlines hit the same notes. MIT reports that 95% of enterprise GenAI pilots fail to deliver profits or cost cuts [1]. S&P Global found that 42% of companies abandoned most AI initiatives in 2025, up from just 17% in 2024 [2]. Gartner predicted in July 2024 that 30% of GenAI projects would be abandoned after proof of concept by the end of 2025 due to unclear business value [3]. NTT DATA reports that 70-85% of GenAI enterprise deployments fail to meet desired ROI [5].

The narrative is clear: AI is failing. The investments aren’t paying off. Companies are pulling the plug.

But here’s what nobody is asking: What if we’re measuring the wrong thing?

We’ve Been Here Before

In 1987, Nobel economist Robert Solow made an observation that became known as the Solow Paradox: “You can see the computer age everywhere but in the productivity statistics” [11]. Computers were spreading through offices and factories. Everyone could see the change. But the economic data showed nothing. No productivity gains. No GDP impact. Nothing.

Solow was right. For about another decade.

IT investments from the 1970s and 1980s only delivered productivity gains in the late 1990s [12]. The technology had to mature. Organizations had to learn how to use it. Workflows had to adapt. And then, suddenly, the productivity boom arrived. The investments paid off. Just not on the timeline anyone expected.

Economists are already resurrecting the Solow Paradox to explain AI’s current measurement failure [13]. The pattern is the same. The technology is everywhere. The productivity statistics show nothing. And everyone is panicking.

But here’s what’s different this time: the clock is moving faster than it ever has.

The Acceleration Nobody Is Talking About

Every major technology follows the same adoption curve. What’s changed is how quickly each wave moves through it.

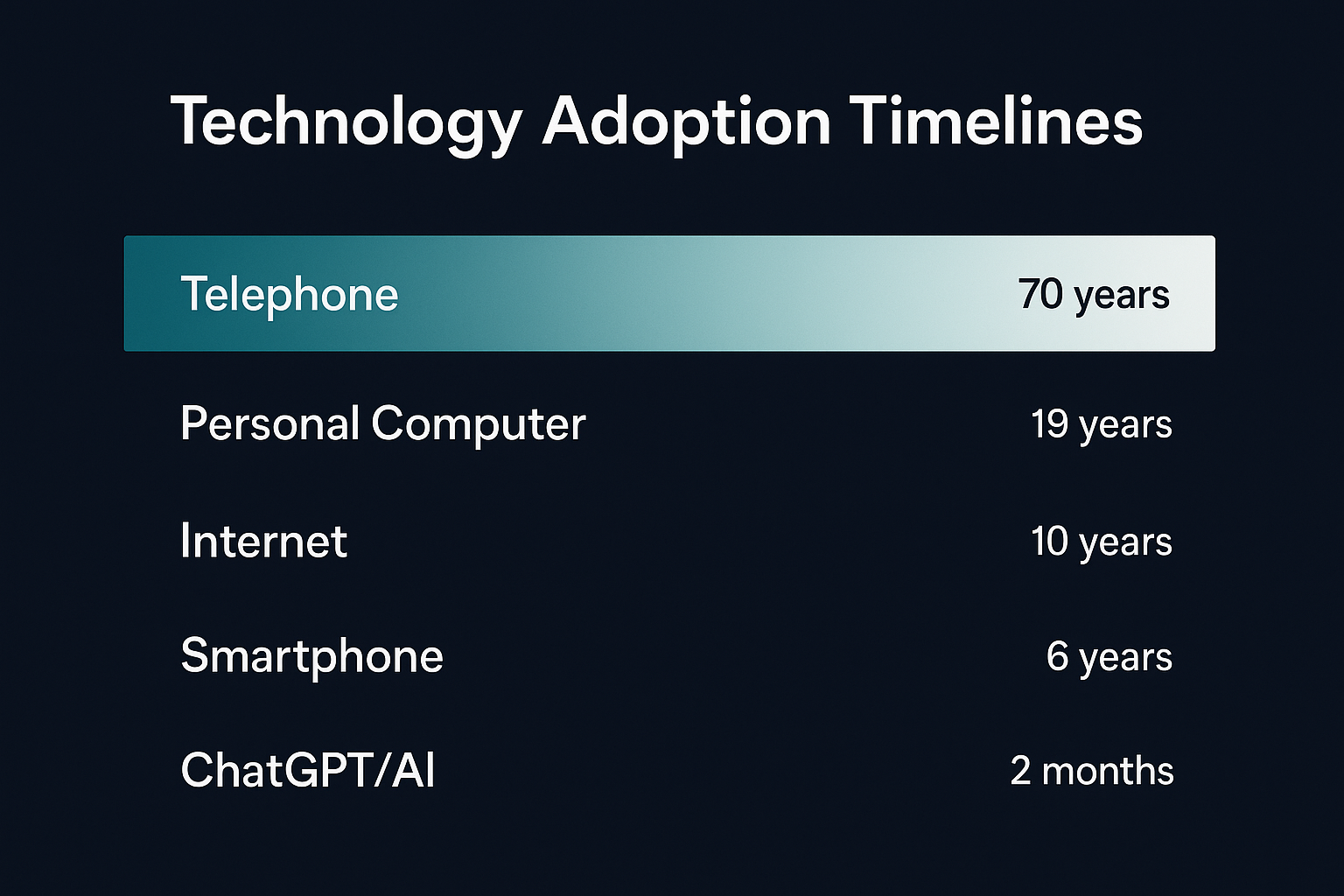

The telephone took 70 years to reach 50% of households. The personal computer took 19 years. The internet took 10. The smartphone took 6. ChatGPT reached 100 million users in two months [19].

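Read that sequence again. Each cycle is far shorter than the one before it, often by half or more. (The ChatGPT figure counts users rather than households, so it isn’t a like-for-like comparison, but the direction is unmistakable.) The window to build capability before the market shifts isn’t measured in decades anymore. It’s measured in years, maybe less.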
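The size of each step in that sequence is easy to check. The following is a minimal Python sketch that uses only the household figures quoted above; ChatGPT is left out because its figure counts users, not households. The function name `compression_ratios` is illustrative.

```python
# Years to reach 50% of households, as quoted in the text above.
years_to_half = [
    ("telephone", 70),
    ("personal computer", 19),
    ("internet", 10),
    ("smartphone", 6),
]


def compression_ratios(series):
    """Return (earlier, later, ratio) for each consecutive pair,
    where ratio = later_years / earlier_years."""
    return [
        (a_name, b_name, b_years / a_years)
        for (a_name, a_years), (b_name, b_years) in zip(series, series[1:])
    ]


ratios = compression_ratios(years_to_half)
for earlier, later, ratio in ratios:
    print(f"{earlier} -> {later}: {ratio:.2f}x the time")
```

On these figures the steps are uneven: the personal computer took about 0.27 times as long as the telephone, the internet about 0.53 times as long as the PC, and the smartphone 0.6 times as long as the internet.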

This has a name. It’s the compounding effect of infrastructure: each wave builds on everything that came before it. Cloud computing needed the internet. The internet needed personal computers. AI needs all of it, and all of it already exists. The infrastructure is in place. The distribution channels are built. The workforce is digitally fluent. There is less friction between invention and adoption than at any point in human history.

If you’re waiting for clear ROI metrics before investing in AI capability, you’re running the same playbook that worked when you had decades to decide. You don’t have decades. You might not have years.

Why ROI Is the Wrong Metric

UC Berkeley put it bluntly in September 2025: “The obsession with traditional ROI reflects the same flawed thinking that has plagued every major tech transformation” [14]. We’re trying to count the candles we stopped buying instead of measuring what electricity made possible.

Harvard Business Review found that what actually derails AI isn’t the technology. It’s fear, rigid workflows, and power structures [15]. Moderna CEO Stéphane Bancel said it directly: “The biggest challenge to becoming an AI company is a change management challenge” [16].

The problem isn’t that AI doesn’t work. The problem is that we’re measuring deployment when we should be measuring adoption. We’re running quarterly experiments on multi-year transformations. We’re designing pilots to fail.

IBM’s CEO study found that 79% of CEOs perceive AI gains in their organizations, but only 29% can actually measure ROI [4]. That 50-point gap isn’t a technology failure. It’s a measurement failure. The gains are real. The measurement frameworks are broken.

Four Patterns That Guarantee Failure

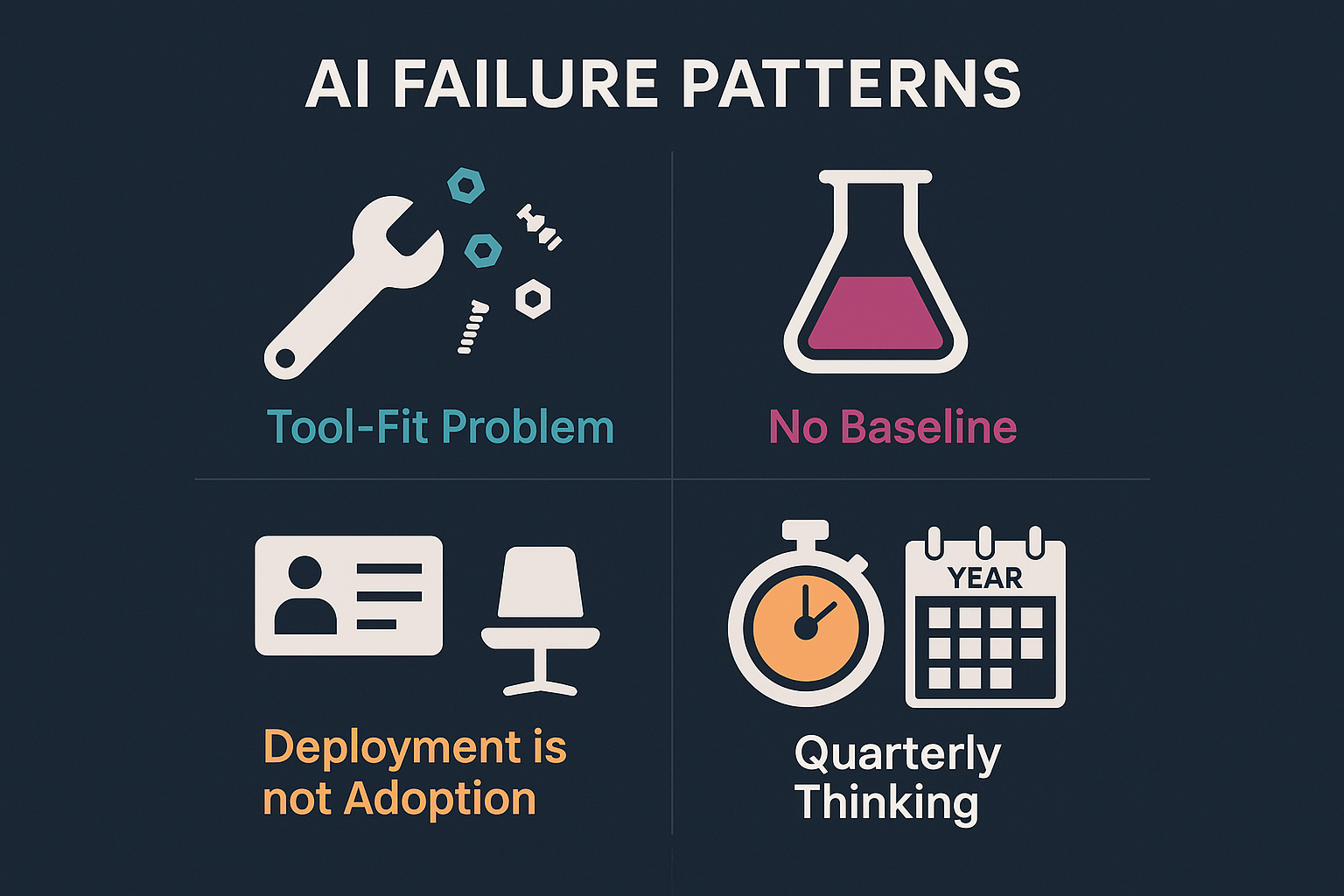

These aren’t edge cases. These are the default. I’ve watched dozens of AI initiatives crash across industries, and the same patterns show up so consistently they’re almost mechanical. If your organization is running any of these plays, the outcome is already written.

1. The Tool-Fit Problem

Leadership buys an enterprise AI platform, pushes it onto every team, and waits for the magic to happen. Six months later, adoption is flat and someone writes a memo about how “AI didn’t work here.”

AI never had a chance. The organization tried to force a single solution onto workflows it was never designed to fit. This is the one-size-fits-all fallacy: the belief that one AI tool can serve every function, every role, every use case. It can’t. A financial analyst, a recruiter, and a project manager don’t do the same work. Why would the same AI configuration serve all three?

The organizations getting real value aren’t deploying monolithic AI platforms. They’re building individual, purpose-fit agents tuned to specific workflows. The difference between “we gave everyone Copilot” and “we built an AI that actually understands how our underwriting team works” is the difference between shelfware and transformation.

2. Experiments Designed to Fail

Companies are running billion-dollar AI pilots with less scientific rigor than a 4th-grade science fair project [20].

No baseline. No control group. No clear hypothesis. Most organizations have no idea how long their current processes take, how many steps are involved, or where the actual bottlenecks are. Then they deploy AI and claim “30% improvement.” Thirty percent of what? Compared to what? Measured how?

You learned this in grade school: you can’t measure change without measuring the starting point. You can’t attribute causation without isolating variables. You can’t draw conclusions from a sample size of one.

Harvard Business Review documented this in August 2025: companies are running experiments that cannot succeed because they were never designed to produce valid results [17]. When the pilot “fails,” leadership concludes AI isn’t ready. What they should conclude is that their experiment was broken.
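The missing pieces named above (a baseline, a control group, a stated comparison) can be sketched in a few lines. This is a minimal difference-in-differences sketch in Python, not a method taken from the cited article. The cycle-time numbers and the function name `diff_in_diff` are hypothetical and exist only for illustration.

```python
from statistics import mean


def diff_in_diff(pilot_before, pilot_after, control_before, control_after):
    """Estimate the pilot's effect as the change in the pilot group
    minus the change in the control group over the same period."""
    pilot_change = mean(pilot_after) - mean(pilot_before)
    control_change = mean(control_after) - mean(control_before)
    return pilot_change - control_change


# Hypothetical task cycle times in hours (illustrative values only).
pilot_before = [10.0, 12.0, 11.0, 9.0]     # baseline, team that gets the AI tool
pilot_after = [7.0, 8.0, 8.0, 7.0]         # same team after rollout
control_before = [10.0, 11.0, 12.0, 9.0]   # baseline, team without the tool
control_after = [9.0, 10.0, 11.0, 8.0]     # same team, same period, no tool

baseline = mean(pilot_before)
naive_change = mean(pilot_after) - baseline
effect = diff_in_diff(pilot_before, pilot_after, control_before, control_after)

print(f"Naive before/after change: {naive_change / baseline:+.0%} of baseline")
print(f"Control-adjusted effect:   {effect / baseline:+.0%} of baseline")
```

With these made-up numbers the naive before/after comparison shows roughly a 29% reduction in cycle time, while the control-adjusted estimate is roughly 19%, because the control team also got faster over the same period. A sample this small says nothing about statistical significance; the point is only that “30% of what?” has an answer once a baseline and a control exist.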

3. Measuring Deployment, Not Adoption

The dashboard shows 10,000 licenses provisioned. Leadership calls it a success.

But how many people are actually using it? And what are they using it for? If the answer is “writing emails and summarizing meeting notes,” that’s not AI adoption. That’s a $50-per-seat spell checker. Provisioning licenses shouldn’t be a success metric. At this point, it should be table stakes.

The real questions are harder: Are people building new workflows around AI capabilities? Are those workflows spreading organically to other teams? Is anyone doing work that wasn’t possible six months ago? Tool rollout is easy. Behavior change is hard. Most companies measure the easy thing and declare victory while ignoring the hard thing entirely.

4. Quarterly Thinking

AI is an infrastructure-level shift. It takes years to integrate, optimize, and realize value. But public companies think in quarters. If the ROI doesn’t show up in two earnings cycles, the project gets cut.

When a leader says “AI is not ready” or “AI didn’t perform to what we thought it would,” that’s not a verdict on the technology. That’s a tell. It signals leadership that doesn’t understand what AI is capable of doing today, or how transformative technology actually matures. The leaders who said the same thing about cloud in 2011 are the ones who spent the next decade playing catch-up.

This isn’t AI failure. This is impatience masquerading as analysis.

What to Measure Instead

If ROI is the wrong metric, what is the right one?

Start with adoption velocity. Not how many licenses you bought, but how quickly usage spreads organically. When one team builds a workflow and three other teams adopt it without being told to, that’s signal. When an employee builds an AI-assisted process and becomes the go-to person for their department, that’s signal. When people start solving problems they previously escalated or ignored, that’s signal.

Measure what people are actually doing with AI. Not just “are they logging in” but “are they changing how they work?” Are they developing workflows that others organically adopt? Are they finding use cases nobody anticipated? The best AI adoption metric isn’t a dashboard. It’s the stories that start spreading through the organization: “Did you see what Sarah’s team built?”

Track workflow integration: how many processes have been redesigned around AI capabilities, not just augmented with them. Measure time-to-competency: how long until a new user becomes productive with the tool. Watch for the ambassadors: the employees who become their own distribution channels for AI-driven value, teaching colleagues, sharing templates, building on each other’s work.
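Two of these signals, active use versus provisioned licenses and organic spread of a workflow across teams, can be computed from an ordinary usage log. The sketch below is one possible way to do it in Python, under stated assumptions: the log format, team names, workflow names, and the 1,200 active-user count are all hypothetical, and only the 10,000-license figure echoes the example earlier in this article.

```python
from collections import defaultdict

# Hypothetical usage log: (week, team, workflow) for each recorded use.
usage_log = [
    (1, "underwriting", "claims-triage"),
    (2, "underwriting", "claims-triage"),
    (3, "finance", "claims-triage"),
    (3, "recruiting", "claims-triage"),
    (5, "legal", "claims-triage"),
    (2, "finance", "forecast-notes"),
]


def first_adoption_weeks(log):
    """Map each workflow to {team: first week that team used it}."""
    first_seen = defaultdict(dict)
    for week, team, workflow in sorted(log):
        first_seen[workflow].setdefault(team, week)
    return first_seen


def spread(log, workflow):
    """Teams that picked up a workflow after it first appeared,
    with the lag in weeks from that first appearance."""
    weeks = first_adoption_weeks(log)[workflow]
    origin_week = min(weeks.values())
    return {team: wk - origin_week for team, wk in weeks.items() if wk > origin_week}


def active_rate(active_users, licenses_provisioned):
    """Share of provisioned licenses that are actually in use."""
    return active_users / licenses_provisioned


print(spread(usage_log, "claims-triage"))
print(f"Active rate: {active_rate(1_200, 10_000):.0%}")
```

In this toy log, one workflow spreads from its originating team to three others within four weeks while the other stays where it started. That difference is the signal a license count cannot show.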

Most importantly, measure optionality. AI isn’t just about doing today’s work faster. It’s about what becomes possible tomorrow. The companies that treated cloud computing as an efficiency play got some cost savings. The companies that treated it as a capability platform built entirely new businesses. The same will be true for AI.

The Cloud Parallel: A Playbook on Repeat

Let me be specific about what happened with cloud computing, because the parallels are almost embarrassing.

In 2010, when Gartner sent cloud into the Trough of Disillusionment, enterprise adoption was around 20%. Security concerns dominated every conversation. Compliance teams blocked projects. CFOs demanded ROI projections that nobody could deliver. The same articles we’re reading about AI today were being written about cloud then, word for word.

By 2015, cloud adoption had crossed 50%. By 2020, it was over 90%. Today it sits at 94% [18]. The Trough of Disillusionment lasted about four years. The companies that kept investing through the skepticism built capabilities their competitors are still trying to replicate.

The pattern is mechanical. New technology arrives. Expectations inflate. Reality disappoints. Skeptics declare failure. Patient investors build capability. Eventually, the technology matures, adoption spreads, and productivity follows. Every single time.

But each time, it happens faster. Electricity followed this curve over 40 years. Cloud computing followed it in under a decade. AI is following it now, and the curve is steeper than anything we’ve seen.

The Measurement Trap

When you demand immediate ROI from AI, you bias your organization toward small, safe projects. Automate a single report. Summarize a few documents. Cut a minor cost. These projects can show a return. They also don’t matter.

The transformative applications, the ones that actually change how work gets done, don’t fit in an ROI spreadsheet. How do you calculate the return on having an AI that can reason through problems your team hasn’t encountered yet? How do you value the ability to experiment with capabilities that don’t exist in your current workflow?

You don’t. You can’t. And that’s the point.

Your Move

You have a choice. You can treat AI like a cost center to be optimized. Run the pilots. Demand the ROI. Kill the projects that don’t deliver immediate returns. You’ll be right about the metrics and wrong about the future.

Or you can treat AI like the infrastructure shift it is. Invest in capability. Measure adoption, not deployment. Accept that the productivity gains will come on a timeline you don’t control.

The telephone took 70 years to reach mass adoption. Cloud took a decade. AI is moving faster than either. And the same publications declaring it a failure today declared cloud a failure in 2010.

They were wrong then. They’re wrong now. The only question is which side of that bet you want to be on.

Sources

1. The GenAI Divide: State of AI in Business 2025. MIT NANDA Research Team, Fortune, August 2025. 95% of enterprise GenAI pilots fail to deliver profits or cost cuts.
2. VotE: AI & Machine Learning Survey 2025. S&P Global Market Intelligence, 2025. 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024.
3. Gartner: 30% of GenAI Projects Will Be Abandoned After Proof of Concept by End of 2025. Gartner, July 2024.
4. IBM CEO Study 2024. IBM Institute for Business Value, 2024. 79% of CEOs perceive AI gains; only 29% can actually measure ROI.
5. NTT DATA AI Survey 2024. NTT DATA, 2024. 70–85% of GenAI enterprise deployments fail to meet desired ROI.
6. Hype Cycle for Cloud Computing 2010. Gartner, 2010. Cloud computing positioned at Peak of Inflated Expectations entering Trough of Disillusionment.
7. It’s official: ‘Cloud computing’ is now meaningless. InfoWorld, August 2011.
8. Top 5 disappointments as cloud computing enters the Trough of Disillusionment. Computerworld, October 2010.
9. Cloud Computing’s ROI Increasingly Elusive, Survey Finds. Joe McKendrick, Forbes, February 2013.
10. Is ROI the Right Measure of Cloud Success? Gartner Group via Forbes, June 2012.
11. The Solow Productivity Paradox: What Do Computers Do to Productivity? Brookings Institution. Robert Solow’s 1987 observation: “You can see the computer age everywhere but in the productivity statistics.”
12. AI and Past Tech Disruptions Inform Economic Impact. EY, 2025. IT investments from the 1970s–80s paid off in the late 1990s, 10–20 years later.
13. Thousands of CEOs admitted AI had no impact on employment or productivity. Fortune, February 2026. Economists resurrecting the Solow Paradox to explain AI’s current measurement gap.
14. Beyond ROI: Are We Using the Wrong Metric in Measuring AI Success? UC Berkeley Executive Education, September 2025.
15. Overcoming the Organizational Barriers to AI Adoption. Harvard Business Review, November 2025. Fear, rigid workflows, and entrenched power structures quietly derail AI initiatives.
16. AI Adoption Barriers. Harvard Business School Online, 2024. Moderna CEO Stéphane Bancel: “The biggest challenge to becoming an AI company is a change management challenge.”
17. Beware the AI Experimentation Trap. Harvard Business Review, August 2025. Companies run experiments without baselines or control groups, so they are designed to fail from the start.
18. State of the Cloud Report 2024. Flexera, 2024. 94% of enterprises use cloud computing.
19. Technology adoption timeline data. Multiple sources: DataTrek Research; UBS/Swiss Re via PYMNTS (ChatGPT reaching 100M users in two months); Harvard Business Review adoption curve research.
20. Five AI Fails and How to Avoid Them. MITRE, 2025. Catalogues the most common patterns that cause AI initiatives to fail in enterprise environments.