One AI to Rule Them All? Why the Monolith Model Is Breaking Enterprise AI

When the Tool Becomes the Test

February 2026. Accenture senior managers receive an internal email: AI tool usage will be tracked weekly and factored into promotion decisions. The Financial Times reports that “use of our key tools will be a visible input to talent discussions,” directly impacting summer promotions. CEO Julie Sweet had already warned that employees unable to adapt to AI would be “exited” from the company. (The Decoder; TechRadar)

Three months later, senior consultants are calling the mandated tools “broken slop generators.” One employee tells reporters they would “quit immediately” if the rule directly affected them. The company that advises Fortune 500s on digital transformation is watching its own talent contemplate exit over a technology mandate.

This isn’t an isolated incident. It’s a pattern, and we’ve seen it before.

We’ve Been Here Before

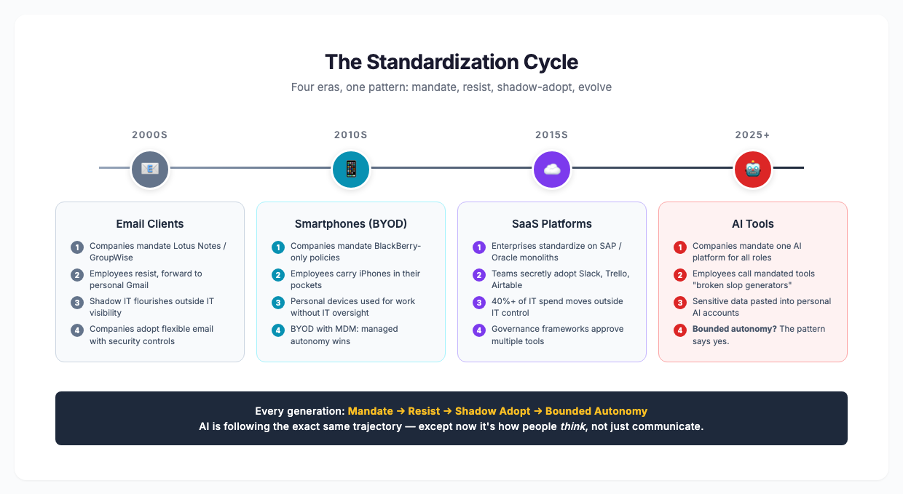

In the early 2000s, enterprises mandated standardized email clients, locking employees into Lotus Notes or GroupWise while the world moved to web-based alternatives. Adoption was grudging. Productivity dropped. People forwarded work to personal Gmail accounts because the mandated tools didn’t serve them.

A decade later, the same pattern emerged with smartphones. Companies tried to mandate BlackBerry-only policies while employees carried iPhones in their pockets. The result was the BYOD (Bring Your Own Device) movement, a hard-won acknowledgment that people work better with tools they choose. IT departments fought it for years before conceding that managed autonomy outperformed forced standardization.

The SaaS revolution repeated the cycle. Enterprises standardized on monolithic platforms like SAP or Oracle while teams secretly adopted Slack, Trello, and Airtable because those tools fit their actual workflows. Gartner estimates that 30-40% of IT spending in large enterprises occurs outside of IT’s control (CIO.com; Gartner). The eventual solution? Governance frameworks that approved multiple tools rather than mandating one.

Email clients. Smartphones. SaaS platforms. Every time, the pattern is the same: mandate one tool, encounter resistance, watch people find workarounds, eventually concede that autonomy within guardrails works better. AI is following the exact same trajectory, but the stakes are higher and the pace is unprecedented. AI isn’t just a communication tool or a project tracker. It’s how people think.

The Bigger Pattern

General Electric learned this lesson at a cost of over $7 billion. Their Predix platform, marketed as “the operating system for the industrial internet,” was supposed to standardize how every GE division handled industrial IoT. Engineers were expected to abandon their specialized tools for a one-size-fits-all interface. Adoption was minimal. Revenue projections of $15 billion fell short by approximately $14 billion. GE eventually spun off the entire digital division at a fraction of its investment. (Platform Engineering; Applico)

The reason wasn’t bad technology. It was the assumption that standardization could override how professionals actually work. GE’s engineers had deep, personal relationships with their specialized tools, each one calibrated to specific industrial contexts. Predix asked them to trade expertise for uniformity. They refused.

This isn’t about bad policy at one company. It’s about a fundamental misunderstanding of how humans work with technology, and what happens when we ignore what 2,500 years of philosophy and decades of organizational behavior research have been telling us about human autonomy.

The Monolith Trap

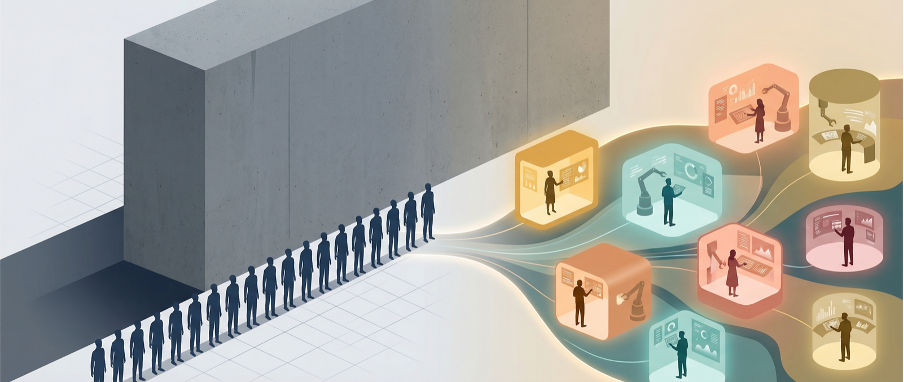

Most companies approach AI the same way:

- Select one platform (Microsoft Copilot, Google Workspace AI, OpenAI Enterprise, or a niche vendor)

- Roll it out company-wide (same interface, same features, same workflow)

- Mandate adoption (mostly useless training sessions, usage targets, and executive sponsorship where there’s more leadership “buy-in” than actual users)

- Measure success by vanity metrics (logins, features used, hours spent, numbers that look good in a deck but say nothing about value created)

This model assumes humans are interchangeable, that an engineer, a marketer, a strategist, and a sales lead all need the same AI capabilities delivered the same way.

Henry Ford famously said in 1909, and later wrote in his autobiography My Life and Work (1922): “Any customer can have a car painted any color that he wants, so long as it is black.”

Ford wasn’t being stubborn. He was optimizing for mass production. Black paint dried fastest, cost least, and enabled the assembly line efficiency that made the Model T affordable. It was a brilliant decision for manufacturing. But knowledge work isn’t manufacturing. People’s minds don’t roll off an assembly line, and treating them like they do is how you end up with 95% of your AI pilots failing.

The results are predictable. Gallup’s 2024 State of the Global Workplace study found that only 23% of employees worldwide are truly engaged in their work, while organizations that foster employee autonomy see engagement increase by up to 25% and productivity rise by 20-21%. (Gallup) The cost of disengagement in the U.S. alone is approximately $2 trillion in lost productivity annually.

But here’s where it gets nuanced: standardization isn’t inherently bad. It creates consistency, reduces training costs, and simplifies support. The problem isn’t standardization itself. It’s the assumption that standardization and autonomy are mutually exclusive. They’re not. The companies that get AI right will build frameworks that provide both.

The Self Is Not a Template

Søren Kierkegaard was a Danish philosopher in the 1840s. Think of him as the original existentialist, a man who spent his career arguing that you can’t reduce a human being to a system. In The Sickness Unto Death (1849), he wrote that the self is “a relation that relates itself to itself.” In plain language: you are not a fixed thing. You’re an ongoing process of becoming. Your cognitive style, your creative process, your professional identity are unique and evolving. Forcing everyone through the same AI interface doesn’t augment individuals. It flattens them.

Kierkegaard argued that the attempt to standardize the self creates what he called despair — not sadness, but a deeper disconnection from who you actually are. When a company mandates one AI tool for every role, it’s not just an inconvenience. It’s telling people: your way of thinking doesn’t matter. Use ours.

This isn’t an abstract philosophical argument. It’s a diagnostic framework that explains why Accenture’s senior consultants are calling their mandated tools “broken slop generators.” Those tools might work fine, but they don’t work for them. And that distinction matters.

Carl Jung, the Swiss psychiatrist whose work on personality types eventually became the foundation for the Myers-Briggs framework (which, love it or hate it, is used by 88% of Fortune 500 companies), described a process he called individuation: the integration of conscious and unconscious elements into a whole, distinct self. Each person’s path to effective work is different. An engineer’s relationship with AI should look nothing like a marketer’s, which should look nothing like a strategist’s. Tools that support individuation enhance human potential. Tools that enforce conformity limit it.

Friedrich Nietzsche, writing in Thus Spoke Zarathustra (1883-1885), described the “will to power” not as domination but as self-overcoming: the drive to grow, create, push beyond your current limits. A monolith AI doesn’t enable self-overcoming. It enables compliance. Individual AI relationships ask a fundamentally different question: “What are you trying to become? Let me help you get there.”

Jean-Paul Sartre declared that “existence precedes essence,” meaning we define ourselves through choices and actions, not through categories imposed on us. A one-size-fits-all AI pre-defines how you should work. Individual AI respects that your workflow, priorities, and creative process are yours to define.

These philosophers aren’t decorative. They’re explaining a pattern that’s been consistent for 2,500 years: when systems deny human agency, humans resist — consciously, subconsciously, and every single time.

What the Research Actually Shows

MIT’s “GenAI Divide: State of AI in Business 2025” report found that 95% of enterprise generative AI pilot programs fail to deliver measurable business impact or scale to production. The reason isn’t the technology. It’s the execution strategy. Companies that succeed treat AI as augmentation within human workflows, not as replacement of human judgment. (MIT/MLQ; AI Magazine)

The successful 5%? They prioritize deep workflow integration, domain-specific applications, and iterative learning. In other words, they let teams and individuals shape how AI fits their work rather than mandating a uniform approach.

Gallup’s meta-analysis across 456 studies, 276 organizations, 54 industries, and 96 countries confirmed that employee engagement drives 11 key performance outcomes including profitability and productivity. Their 2024 report found that highly engaged teams show 23% higher productivity. And autonomy is one of the strongest drivers of engagement. (Gallup)

The World Economic Forum’s Future of Jobs Report 2023, surveying 803 companies employing over 11.3 million workers across 45 economies, identified technology adoption as the primary driver of business transformation while simultaneously finding that companies offering flexible work models experience significantly higher retention and satisfaction. (WEF)

The research is consistent: when employees have agency in how they work, they perform better. When they’re forced into standardized molds, they comply but they don’t commit. And in knowledge work, the gap between compliance and commitment is the gap between mediocrity and excellence.

Bounded Autonomy: How It Actually Works

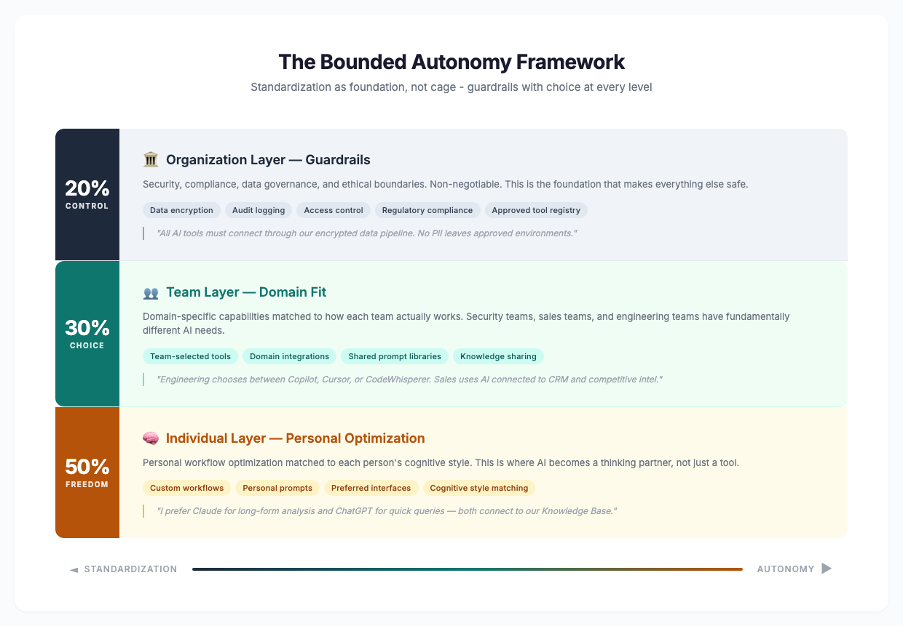

The solution isn’t abandoning standardization. It’s layering it intentionally:

The Organization Layer (Guardrails)

This is where responsible data handling lives. We have a collective obligation to protect the information that can hurt people: personally identifiable information, health records, financial data. That’s not negotiable, and no amount of innovation should compromise it.

But let’s be honest about what’s actually happening. When enterprises build walls, people build tunnels. When enterprises block tunnels, people build exits. We’ve watched this cycle repeat for decades, and AI has accelerated it to the point where rigid boundaries are becoming impossible to enforce. If we haven’t been able to stamp out unsanctioned tool use in thirty years of trying, what makes us think mandating a single AI platform will work now?

Maybe it’s time to challenge the notion of hard boundaries entirely. Rather than asking “how do we stop people from using unauthorized tools,” the better question is “how do we make the authorized path so compelling that people choose it willingly?” The organizations getting this right aren’t building higher walls. They’re building better doors.

The Team Layer (Domain Fit)

Different teams have fundamentally different AI needs. A security team needs AI tuned to CVE databases (Common Vulnerabilities and Exposures, the standardized catalog of known security flaws), threat intelligence feeds, and incident response patterns integrated with their SIEM (Security Information and Event Management, the system that aggregates and analyzes security alerts across the organization). A marketing team needs AI for content creation, audience analysis, and campaign optimization. These are not the same tool, and pretending they are wastes everyone’s time.

This is where “why should I care?” gets answered with action instead of theory. When a security analyst can ask their AI “Show me all CVEs from the last 30 days affecting our tech stack, ranked by exploitability” and get an answer in seconds instead of hours, that’s not an incremental improvement. That’s a fundamentally different relationship with their work. When a sales lead walks into a client meeting with a briefing synthesized from CRM data, recent news, and competitive intelligence without having spent two hours preparing it, that’s not a feature. That’s freedom to focus on what actually requires human judgment: the relationship itself.
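To make the security analyst's question concrete, here is a minimal sketch of the filtering and ranking it implies: keep vulnerabilities published in the last 30 days, keep only those touching products in the team's stack, and sort by exploitability. Everything in it is an assumption for illustration: the `CveRecord` fields, the 0-10 `exploitability` score, the placeholder CVE IDs, and the sample stack are hypothetical, and a real deployment would pull records from the team's own vulnerability feed or SIEM rather than a hard-coded list.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class CveRecord:
    cve_id: str            # placeholder IDs, not real CVE entries
    product: str           # product the flaw affects
    published: date
    exploitability: float  # hypothetical 0-10 score, higher is worse


def recent_cves_for_stack(records, tech_stack, today, window_days=30):
    """Return CVEs from the last `window_days` that affect `tech_stack`,
    ranked from most to least exploitable."""
    cutoff = today - timedelta(days=window_days)
    matches = [
        r for r in records
        if r.published >= cutoff and r.product in tech_stack
    ]
    return sorted(matches, key=lambda r: r.exploitability, reverse=True)


# Illustrative data only.
SAMPLE = [
    CveRecord("CVE-0000-0001", "nginx", date(2026, 1, 20), 3.9),
    CveRecord("CVE-0000-0002", "postgresql", date(2026, 1, 28), 8.6),
    CveRecord("CVE-0000-0003", "nginx", date(2025, 11, 2), 9.8),      # outside the window
    CveRecord("CVE-0000-0004", "wordpress", date(2026, 1, 30), 9.1),  # not in our stack
]

ranked = recent_cves_for_stack(
    SAMPLE, tech_stack={"nginx", "postgresql"}, today=date(2026, 2, 1)
)
print([r.cve_id for r in ranked])
```

The logic itself is trivial. The value in the scenario comes from the AI being connected to the team's data and able to translate a plain-language question into this kind of query, which is exactly the domain fit a generic, company-wide tool tends to lack.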

The Individual Layer (Personal Optimization)

This is where the real transformation happens, and where most enterprises get scared. Individual customization within team and organizational boundaries. Personal prompt libraries. Custom workflows. Preferred interfaces. The ability to say “I prefer Claude for long-form analysis and ChatGPT for quick queries, both connected to our Knowledge Base.”

Kierkegaard’s insight comes alive here. Each person’s cognitive style is different. Some people think in outlines. Others think in conversations. Some need visual mapping. Others need structured data. The individual layer respects that your way of working is valid and gives you tools that match it.

This isn’t chaos. It’s the same principle behind letting developers choose their IDE, letting writers choose their word processor, letting designers choose their creative suite. Professionals do better work with tools that fit how they think.
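One way to picture the three layers working together is as a small resolution rule: the organization layer decides whether a request may go to a general AI tool at all, the team layer defines which tools are approved, and the individual layer picks among them by personal preference. The sketch below is a hypothetical illustration, not a description of any real governance product. The team names, the approved-tool lists, the task labels, and the choice to return `None` for restricted data are all assumptions.

```python
from dataclasses import dataclass, field

# Organization layer (guardrails): data classes that must not be sent
# to a general-purpose AI tool. Hypothetical labels.
RESTRICTED_DATA = {"pii", "health", "financial"}

# Team layer (domain fit): hypothetical approved tools per team.
TEAM_TOOLS = {
    "security": {"claude", "chatgpt"},
    "marketing": {"chatgpt", "gemini"},
}


@dataclass
class Person:
    name: str
    team: str
    # Individual layer: personal mapping of task type -> preferred tool.
    preferences: dict = field(default_factory=dict)


def choose_tool(person, task, data_classes=()):
    """Resolve which tool a person may use for a task, or None if blocked."""
    # 1. Organization guardrail wins over everything else.
    if RESTRICTED_DATA & set(data_classes):
        return None
    # 2. Team layer bounds the available choices.
    approved = TEAM_TOOLS.get(person.team, set())
    # 3. Individual preference applies only inside those bounds.
    preferred = person.preferences.get(task)
    if preferred in approved:
        return preferred
    # Deterministic fallback: first approved tool alphabetically.
    return min(approved) if approved else None


analyst = Person(
    "analyst", "security",
    {"long_form_analysis": "claude", "quick_query": "chatgpt"},
)
marketer = Person("marketer", "marketing", {"drafting": "claude"})

print(choose_tool(analyst, "long_form_analysis"))    # personal preference honored
print(choose_tool(analyst, "quick_query", ["pii"]))  # blocked by the org guardrail
print(choose_tool(marketer, "drafting"))             # preference not approved, falls back
```

The ordering is the point of the design: guardrails are checked first and cannot be overridden, while preference is the last and most flexible step. That is what keeps individual choice from turning into chaos.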

Living Proof: My Shift from Monolith to Multiplicity

I didn’t start here. Like most people in technology, I rode the wave. ChatGPT launched and I was all in. Then Anthropic released Claude and I experimented with that. Then Gemini, then Llama, then the next thing. Each new model brought the race for better reasoning, better benchmarks, better everything, and I was right there in the wake of it, testing every tool against every task, trying to find the one that did it all.

What I found instead were the limitations.

No single tool could hold the full context of what I do. I’m responsible for mentoring, selling, and upskilling across an entire consulting practice with hundreds of people across offices, each with different needs, different clients, different growth trajectories. One AI couldn’t switch between helping me prepare for a client presentation, tracking competitive intelligence, drafting thought leadership, and managing personal logistics without losing the thread.

So I made the shift. Not to a bigger, better single tool, but to specialized outcomes achieved by intelligent workflows and simple automation. One system knows my full professional context across 30 years in software and helps me synthesize across responsibilities. Another monitors my professional network for market intelligence and surfaces opportunities before they’re publicly visible. Another tracks client relationships, proposal timelines, and engagement risks.

Each one has boundaries. And that’s by design. The same way it is with humans. Imagine if you took Einstein and asked him to focus on fifty different things instead of giving him the guardrails to go deep into physics. The greatest individuals in history across science, technology, sports, literature, and art achieved what they did in part because of focus. Sometimes that focus came from circumstance, sometimes from limitation, sometimes from sheer will. But the principle holds: depth requires boundaries. And the bounds that enterprises are trying to eliminate are the same guardrails that have produced some of the greatest outcomes in human history.

Together, these specialized systems extend my ability to think across the many competing demands of leadership in ways that a single tool never could. They don’t replace my judgment. They give me better inputs for it.

I’m not special. The tools I use are available today. The integration effort is modest. What’s uncommon is the mindset shift: from “which AI should I use?” to “what does my work actually need, and how can AI be shaped to serve that?”

That shift is available to anyone. You don’t need a team of AI agents. You might just need one tool, configured thoughtfully for your specific work, with prompts that understand your context, connected to the data that matters for your role, and calibrated to your cognitive style.

The question isn’t whether you can afford to do this. It’s whether you can afford not to, while the people around you are building AI relationships that make them 20-23% more productive (Gallup) and 95% of standardized AI pilots continue to fail (MIT).

The Customer Paradox: Billions for Them, Compliance for Us

Companies understand individualized AI for customers. They’ve been investing in it for decades:

Amazon’s recommendation engine drives an estimated 35% of the company’s revenue. Hundreds of billions of dollars generated by personalizing the experience for each individual customer based on their unique browsing and purchase history.

Netflix’s personalization algorithms save an estimated $1 billion annually in reduced churn by surfacing content tailored to individual viewing patterns.

Stitch Fix built its entire business model on combining AI with human stylists, using individual preference data to curate personalized fashion selections.

These companies invest billions because personalization drives measurable business results. The return on individualized customer AI is so well-established that no serious executive would propose giving every customer the same generic experience.

Now here’s the question that should keep CIOs up at night: If individualized AI generates billions in customer revenue, what’s the cost of NOT individualizing it for employees?

Think about that gap for a moment. Companies pour billions into personalizing customer experiences for people who interact with them for minutes per day. But the employees who spend 8-10 hours daily creating the actual value? They get one standardized tool and a login tracker. The people generating the revenue get less investment in their productivity than the people spending it.

Businesses are legal and accounting constructs. They don’t think, they don’t create, they don’t solve problems, they don’t build relationships. Humans do all of that. Every dollar of that customer revenue, every client relationship, every strategic decision is made by a human being. The same principles that make personalized customer experiences profitable — matching individual preferences, adapting to unique contexts, respecting autonomy — apply with even greater force to the people who actually run the business.

How do we close the gap between customer-facing value and employee-bound potential? That’s the real question. And it’s costing companies more than they realize in engagement, retention, and the innovation they’ll never see because their best people are fighting tools instead of using them.

McKinsey’s 25,000 Agents: Read the Fine Print

McKinsey deployed 25,000 AI agents alongside 40,000 human consultants. CEO Bob Sternfels confirmed the numbers in early 2026. They report 2.5 million charts generated in six months and 1.5 million hours saved on search and synthesis tasks. They’re aiming for a 1:1 agent-to-human ratio within 18 months. (India Today; ByteIota)

On the surface, this looks like proof that monoliths work. But read the fine print.

McKinsey’s agents handle standardized work: document preparation, data compilation, research synthesis, chart generation. The tasks that were previously delegated to junior consultants. Their “25-squared” model explicitly aims to increase client-facing roles by 25% while reducing non-client-facing roles by the same amount.

The humans handle individualized work: relationship building, creative problem-solving, strategic judgment. The work that actually creates client value.

Even McKinsey, with unlimited resources and a culture built on standardization, uses AI to automate the uniform tasks so humans can do the individualized work that matters. Their success isn’t proof that monoliths replace human judgment. It’s proof that when you free humans from standardized tasks, they perform better at the individualized work where value is created.

Technology should augment human capability, not replace human judgment. The organizations that understand this distinction are the ones building lasting competitive advantage. The ones that don’t are the 95%.

The Call to Action: Build Your Own AI Practice

Here’s where I’m going to be direct.

If your company has already embraced AI and is giving you room to build your own practice with it, excellent. Push for the tools that fit your role. Share what works with your team. Help your organization move from “one tool for everyone” to “guardrails with choice.”

If your company is mandating a single AI tool and measuring your compliance by login frequency, you have a decision to make. You can comply, check the box, and miss the transformation. Or you can advocate for change, demonstrate what individualized AI can do, and help your organization evolve.

And if your company isn’t using AI at all? If leadership sees it as a threat rather than an opportunity, and there’s no path to getting tools approved that could help you do more meaningful work?

Then maybe it’s time to ask whether that company is building toward the future or clinging to the past. Companies not actively promoting employee growth through AI tools are companies that will be drastically disrupted in the coming years. The gap between AI-enabled professionals and those working without it is widening every month. That’s not speculation. It’s the 23% productivity advantage that Gallup measures, compounding over time.

This isn’t about being disloyal. It’s about being honest with yourself. Your professional growth matters. Your ability to do fulfilling, impactful work matters. If the place you work won’t give you the tools to thrive, you owe it to yourself to find one that will.

Start simple. Pick one AI tool. Use it daily for your actual work, not as a novelty, but as a thinking partner. Configure it for your specific context. Build prompts that understand your domain. Connect it to your data where you can. Make it yours.

Then notice the difference. Notice how much faster you synthesize information. Notice how much more time you have for the work that requires your human judgment, creativity, and relationships. Notice the gap between what you can do with a personalized AI practice and what you could do without one.

That gap is your future. Build it.

Sources and Citations

Accenture AI Mandate:

- The Decoder: “Accenture ties promotions to AI tool usage while some employees call the tools ‘broken slop generators’”

- TechRadar: “Accenture tells workers getting a promotion will require regular adoption of AI”

- Financial Times (original reporting, referenced in above sources)

GE Predix Platform:

- Platform Engineering: “How General Electric Burned $7 Billion on Their Platform”

- Applico: “Why GE Digital Failed”

Henry Ford Quote:

- Ford, Henry. My Life and Work. 1922. Co-written with Samuel Crowther. The quote refers to the Model T production era (1914-1925), when black paint was chosen for its fast drying time and low cost, enabling assembly line efficiency.

Shadow IT:

- CIO.com: “How to Eliminate Enterprise Shadow IT”

- Gartner estimates 30-40% of IT spending in large enterprises occurs outside IT control (Josys/Gartner)

McKinsey AI Deployment:

- India Today: “McKinsey CEO says 25,000 employees in his company are AI agents” (Jan 2026)

- ByteIota: “McKinsey 25K AI Agents”

MIT AI Failure Research:

- MIT: “The GenAI Divide: State of AI in Business 2025”

- MLQ.ai: “MIT Study: 95% of Generative AI Pilots Fail”

- AI Magazine: “MIT: Why 95% of Enterprise AI Investments Fail to Deliver”

Gallup Employee Engagement:

- Gallup: “Employee Engagement Drives Growth”

- Gallup: 2024 State of the Global Workplace (456 studies, 276 organizations, 54 industries, 96 countries)

- Key findings: Autonomy increases engagement up to 25%; highly engaged teams show 23% higher productivity; disengagement costs U.S. ~$2 trillion annually

World Economic Forum:

- WEF: Future of Jobs Report 2023 (803 companies, 11.3 million workers, 45 economies)

Philosophical Sources:

- Kierkegaard, Søren. The Sickness Unto Death. 1849.

- Jung, Carl. The Archetypes and the Collective Unconscious. 1934.

- Nietzsche, Friedrich. Thus Spoke Zarathustra. 1883-1885.

- Sartre, Jean-Paul. Being and Nothingness. 1943.